When I started my first job in 2007 as part of a graduate programme, I was signed up as a Java developer on the Java skills track. After joining, because my engineering degree covered both software and hardware, I was instead put on a different skills track called “Development Architecture”, or “DevArch” for short, as part of a group called “Development Control Services”, which specialised in automating configuration and environment management workflows for projects. Many of those in my starter group who were in a similar position complained that this was not what they had signed up for and that they wanted to be developers. At this point I would like to tell you I stuck with it due to my vision and belief that this was indeed the future, but that wasn’t the case. Like many graduates, I wasn't really sure what I wanted to do long term. However, in time I became passionate about the job, and I loved the fact that you never did the same activity for more than a few days in a row. The variety and creative freedom the job brought, along with the ability to help others do their jobs and make a real difference to projects, were certainly appealing.

My first project at my new job was to build and deploy the Norwegian pensions website, and I was tasked with creating continuous integration processes using a relatively new tool called “CruiseControl”. In that role I looked after deploying configurable items, such as code and related config, through environments that acted as test quality gates (“component test”, “integration test”, “pre-production” and “production”) using our in-house build and deployment tool.
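
As a rough illustration of what promoting a release through those quality gates looks like, here is a minimal Python sketch. Only the environment names come from the project described above; `Release`, `promote` and `sample_gate` are hypothetical names made up for this example, and it is not a reconstruction of how our in-house tool actually worked.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Environment names are taken from the post; everything else is hypothetical.
ENVIRONMENTS = ["component test", "integration test", "pre-production", "production"]


@dataclass
class Release:
    """A configurable item: a code version plus its related config."""
    version: str
    config: dict
    deployed_to: List[str] = field(default_factory=list)


def promote(release: Release, gate: Callable[[Release, str], bool]) -> List[str]:
    """Deploy to each environment in order, stopping at the first failed gate."""
    for env in ENVIRONMENTS:
        release.deployed_to.append(env)  # stand-in for a real deployment step
        if not gate(release, env):
            break  # a failed gate blocks promotion to later environments
    return release.deployed_to


def sample_gate(release: Release, env: str) -> bool:
    """Example gate: pretend the integration tests fail for this build."""
    return env != "integration test"


if __name__ == "__main__":
    print(promote(Release("1.0.3", {"db_host": "example"}), sample_gate))
```

The point of the sketch is simply that code and config travel together as one item, and that nothing reaches a later environment until the gate for the earlier one has passed.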

This team sounds a lot like a DevOps initiative today, something that is being branded a “fad” or “new philosophy” when in reality it has always been a core component of software delivery. On every project I have worked on since that very first one, I have striven to encourage the same principles of knowledge sharing and learning. When you couple this with individuals who are respectful of each other, keep calm under pressure and operate without ego or the need to be heroes, great things can happen. All this contributes to a team dynamic that allows teams to be more productive, deliver products to market faster and enjoy their jobs. The desire for repeatability and predictability in processes is not a new aim, and it is as valid in 2015 as it was in 2007. This is not magic, myth or unicorns; it is completely achievable.

Back in 2010, cloud computing was still taking off. Any experienced engineer worth their salt working in the configuration management space could see that cloud infrastructure would give an adrenaline shot to “Development Architecture”, but I don’t think anyone imagined it would see the boom it has today. The principle was that the expensive 12-man environment management team that each project required to build infrastructure manually could be replaced by a smaller team and virtualised infrastructure. Instead, a few highly skilled people who understood infrastructure could create a set of APIs and scripts and use them to provide infrastructure as a service, once cloud platforms had stabilised and actually worked as they should. Like many other early adopters of cloud technologies, we had lots of fun using vendors' first attempts at cloud, many of which were very unstable and buggy.

This brings us to 2015, and the next blocker after infrastructure is networking. Teams need an easy way to consume networking, just as they do infrastructure, because waiting for a networking team to service tickets will just not do, and a process is only as quick as its slowest component. This has seen a wealth of “software-defined networking” technologies burst onto the scene, with Nuage, NSX, ACI, Contrail, PLUMgrid and OpenDaylight to name but a few. This extends the configuration management remit once again, this time to include network configurable items, so that we manage the network like we first did with code and then the underlying infrastructure. It is a natural progression and makes for exciting times. The potential to simplify data center deployments using software-defined networking is massive. A lot of the complexity of networking is brought in by security requirements, so putting the network in software opens up many possibilities to keep the networking as simple as possible while still adhering to all security requirements.

Not everyone is well versed in configuration management and its principles, though. I have seen developers and operations staff discuss things like “DevOps teams” and be very disparaging. I put this down to a fundamental lack of understanding on their part, born out of fear that this new buzzword called “DevOps” will end all that they hold dear and somehow take away their jobs or livelihood, which of course is nonsense. In 2007, when I was doing “DevOps” before it was called that and it was still called “Configuration Management” or “Release Management”, we still had a development team and an operations team. Those roles still stand and are not going anywhere; they are just evolving like the technology landscape. This does mean embracing change, though: maybe learning some scripting or at least a DSL, changing the way you work, questioning what you have done before, and being open to new ideas and new skills.

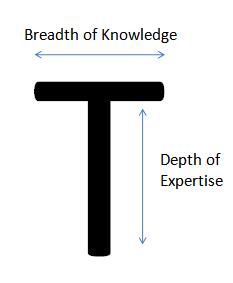

In 2007 the successful project team had roles that spanned different skill sets; we set out to have “T-shaped” people. A slightly rubbish, consultancy-type analogy maybe, but a “T-shaped person” had deep knowledge in one expert area, which was the main reason they were part of the team; that is the depth of the T. That person also had a breadth of knowledge in other areas, which made up the breadth of the T. Taking myself as an example, I was an expert in automation but had a breadth of knowledge in infrastructure, networking and storage. Each person in that team cross-skilled with other T-shaped experts and shared information, to the point that the breadth of skills across the team and in each individual's T became better and better and wider and wider. This is what DevOps is all about: creating true multi-discipline teams and sharing information to build new skills.

Recently I have even seen Amazon offering absurd certifications in DevOps, and it really irritates me. DevOps doesn't mean a team; it is a mindset about sharing information and collaborating with others so we can deliver software to market quicker and become better engineers. By that definition, a certification in DevOps should really be granted to any child who works with other children to build a spaceship out of Lego, as they are successfully working together to build something. But if organisations form a team and call it the “DevOps Team”, don't get too caught up in debating whether this is the wrong approach because it may create another silo. Instead, be positive and thankful that the company has at least considered doing the correct thing, even if it may not fully understand what it wants to achieve. Hopefully the experts it recruits will show it the correct way.

Adopting a DevOps mindset should mean the polar opposite of sitting in an ivory tower and pointing someone towards a ticketing system because you can’t be bothered to talk to them and help them solve their problem. It isn’t “shut up and forget for a day”. It isn't about using existing process as an excuse for something failing or for not helping someone do their job; if that happens frequently, the process needs to be challenged and changed. If you do have a problem with DevOps, then you have an issue with sharing information, self-improvement and talking with people, which is absurd, and you are in the wrong job. DevOps is about working together and challenging the status quo if something doesn’t make sense and is blocking productivity. It promotes the simple option of speaking to other teams and changing things, free of the bureaucratic processes that inhibit us in IT.

On the other hand, DevOps isn’t about reinventing the wheel; sometimes it is about doing the simplest thing and using the simplest solution. Over-complex processes equally hinder the ability to deliver software quicker, so processes need to be lean and quick. It is also about spotting gaps where we can build tools that make our daily jobs easier, and sharing them with others, which is summed up by the “open source” initiative. Finally, DevOps means people working together to create better, streamlined processes. It has nothing to do with tools; they are simply facilitators of process. Tools come and go, but great processes and principles stand the test of time. DevOps really is a no-brainer, so please get on board and be part of the revolution. It has an open-door policy, so it isn't too late: the ship hasn’t sailed yet.
